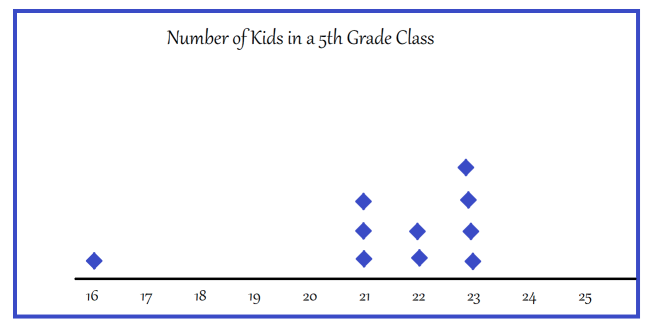

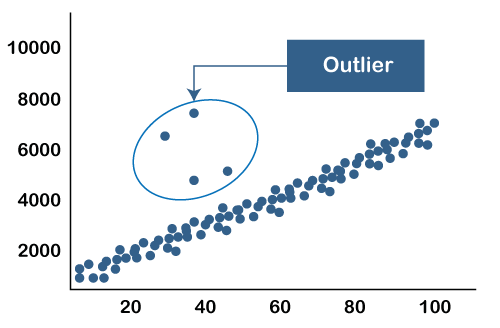

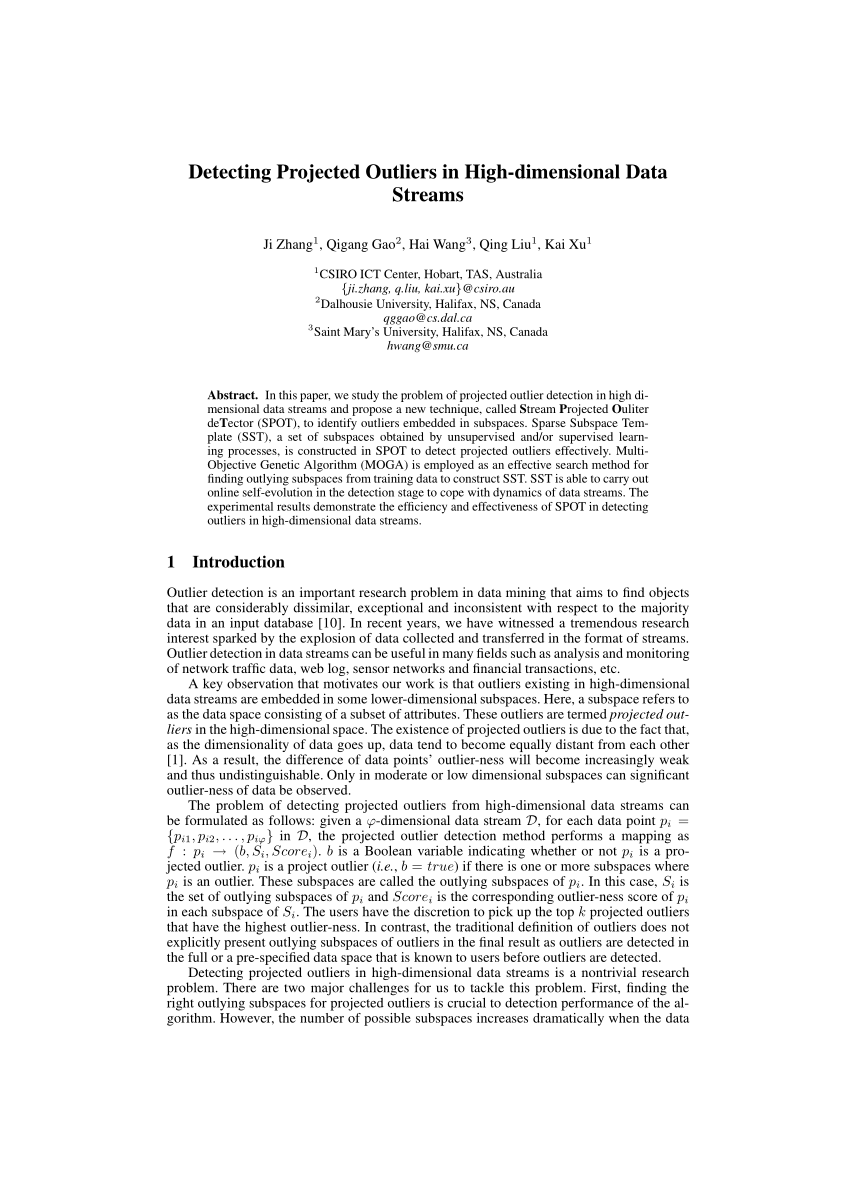

Detecting outliers in high-dimensional data is a challenging problem. In high-dimensional data, the outlying behaviour of data points can only be detected in the locally relevant subsets of data dimensions.

The recommended solution depends on what you mean by 'high dimensional'. Denote by $p$ the number of variables and by $n$ the number of observations. Broadly, for $10 \leq p \leq 100$, the current state-of-the-art approach for outlier detection in a high-dimensional setting is the OGK algorithm, of which many implementations exist. The numerical complexity of the OGK in $p$ is $\mathcal{O}(nk^3)$, where $k$ is the number of components one wishes to retain (which in high-dimensional data is often much smaller than $p$). The big advantage of PCA-Grid is that it can perform both robust estimation and hard variable selection (in the sense of giving exactly 0 weight to a subset of the variables); see the sPCAgrid() function in the pcaPP R package. The big advantage of ROBPCA is its computational efficiency in high dimensions.

Classical Mahalanobis distances cannot be used to find outliers in data because the Mahalanobis distances themselves are sensitive to outliers (i.e., the squared distances will always, by construction, sum to $(n-1)\times p$, roughly the product of the dimensions of your dataset).

To compute these weights, we find the $k$ nearest neighbors of each point in a fast way. Outliers are those points having the largest weights.

Random projections allow us to transform $m$ points from a high-dimensional space into only $O(\log m / \epsilon^2)$ dimensions while changing the distance between any two points by a factor of at most $(1 \pm \epsilon)$. Therefore, the mapping approximately preserves the Euclidean distances, so running the k-NN algorithm on the projected data generates nearly the same result at a much lower cost. Random projection forests (rpForests) perform fast approximate clustering; they approximate the nearest neighbors quickly and efficiently. The use of hash tables is easy and efficient, both for querying and data generation.
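As a rough illustration of the random-projection claim, the sketch below projects synthetic Gaussian data with a dense Gaussian matrix and compares pairwise Euclidean distances before and after. The data, the sizes (50 points, 2000 down to 500 dimensions), and the helper names `random_projection` and `pairwise_distances` are assumptions made for this example, not something taken from the text above.

```python
import numpy as np


def random_projection(X, k, seed=0):
    """Project the rows of X (m x d) to k dimensions with a Gaussian random matrix."""
    rng = np.random.default_rng(seed)
    d = X.shape[1]
    # Scaling by 1/sqrt(k) keeps squared distances unbiased in expectation.
    R = rng.normal(size=(d, k)) / np.sqrt(k)
    return X @ R


def pairwise_distances(X):
    """Euclidean distance matrix computed from the Gram matrix."""
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * (X @ X.T)
    return np.sqrt(np.maximum(d2, 0.0))  # clip tiny negatives from round-off


# Hypothetical data: 50 points in 2000 dimensions.
rng = np.random.default_rng(42)
X = rng.normal(size=(50, 2000))
Y = random_projection(X, k=500)

# Compare each pair's distance after projection to the distance before.
iu = np.triu_indices(X.shape[0], k=1)
ratios = pairwise_distances(Y)[iu] / pairwise_distances(X)[iu]
print("min ratio:", ratios.min(), "max ratio:", ratios.max())
```

The ratios should stay close to 1, which is why a k-NN search on the projected data tends to return nearly the same neighbors. A smaller target dimension widens the spread of the ratios.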
In some cases, finding an approximate solution is acceptable. If searching for the exact solution becomes too costly, we can adopt an approximation algorithm without guaranteeing the exact result. High-dimensional similarity search techniques give a fast approximation for k-NN in a high-dimensional setting. The locality-sensitive hashing (LSH) algorithm hashes similar items into the same buckets with high probability. We expect similar items to end up in the same buckets at the end, as the name suggests. This way, the LSH method is used to find approximate nearest neighbors fast.

When dealing with outliers, it is relatively straightforward to find them in a one-dimensional setting, where we could draw a box plot and find potential outliers. How can I find the outliers in higher dimensions? Pairwise scatterplots won't work, as it is possible for an outlier to exist in 3 dimensions that is not an outlier in any of the 2-dimensional subspaces. I am not thinking of a regression problem, but of true multivariate data, so answers involving robust regression or computing leverage are not helpful.

This tool identifies statistically significant spatial clusters of high values (hot spots) and low values (cold spots) as well as high and low outliers within.

As the number of features grows, the total volume we need to cover to find neighbors increases. Let's consider a simple setting where our dataset has $F$ features, and each feature has a value in the range $[0, 1]$. We fix $K$ to 10 and $N$ (the number of observations) to 1000. Let's further assume that each feature's values are uniformly distributed. In this setting, let's find the side length of a cube containing $K = 10$ data points. For example, if $F = 2$, a square of $0.1 \times 0.1$ covers 1/100 of the total area, covering 10 data points in total. In general, the side length is:

$$\text{side} = \left(\frac{K}{N}\right)^{1/F}$$

If we increment $F$ to 3, to cover 1/100 of the volume we need a cube with a side length of about 0.22. At $F = 10$, the side length is already about 0.63. As we keep incrementing $F$, the side length approaches 1, so the neighborhood ends up spanning almost the whole range of every feature.
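The cube argument can be checked numerically. The sketch below assumes uniformly distributed features on the unit interval, so a cube expected to hold 10 of 1000 points must cover 1/100 of the volume, and its side is that fraction raised to the power of one over the number of features. The function name `side_length` and its parameter names are made up for this example.

```python
def side_length(k, n, f):
    """Side of the cube expected to contain k of n uniform points in [0, 1]^f."""
    fraction = k / n              # share of the total volume the cube must cover
    return fraction ** (1.0 / f)  # a cube with side s has volume s**f


# Same setting as in the text: 10 neighbors out of 1000 observations.
for f in (1, 2, 3, 10, 100):
    print(f, round(side_length(10, 1000, f), 3))
```

Under these assumptions, the side is 0.1 for two features, about 0.215 for three, and about 0.63 for ten, so the value 0.63 corresponds to ten features rather than three. The side approaches 1 as the number of features grows.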
The k-NN algorithm's performance gets worse as the number of features increases. Hence, it is affected by the curse of dimensionality, because in high-dimensional spaces the k-NN algorithm faces two difficulties. First, it becomes computationally more expensive to compute distances and find the nearest neighbors. Second, our assumption that similar points are situated close together breaks down: as the number of features increases, the distances between data points become less distinctive.
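One way to see distances becoming "less distinctive" is to measure how much farther the farthest point is than the nearest one, relative to the nearest. The sketch below does this for uniformly distributed synthetic data. The data, the sample size of 1000, and the function name `relative_contrast` are assumptions chosen for illustration.

```python
import numpy as np


def relative_contrast(n_points, n_features, seed=0):
    """(farthest - nearest) / nearest distance from a random query to random points."""
    rng = np.random.default_rng(seed)
    X = rng.uniform(size=(n_points, n_features))  # hypothetical data set
    q = rng.uniform(size=n_features)              # hypothetical query point
    d = np.linalg.norm(X - q, axis=1)             # Euclidean distances to the query
    return (d.max() - d.min()) / d.min()


for f in (2, 10, 100, 1000):
    print(f, relative_contrast(1000, f))
```

With few features the nearest neighbor is far closer than the farthest point, so the contrast is large. With many features all distances cluster around a similar value, and the notion of a "nearest" neighbor carries much less information.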
